AI Cluster Runtime

Tooling for optimized, validated, and reproducible GPU-accelerated Kubernetes.

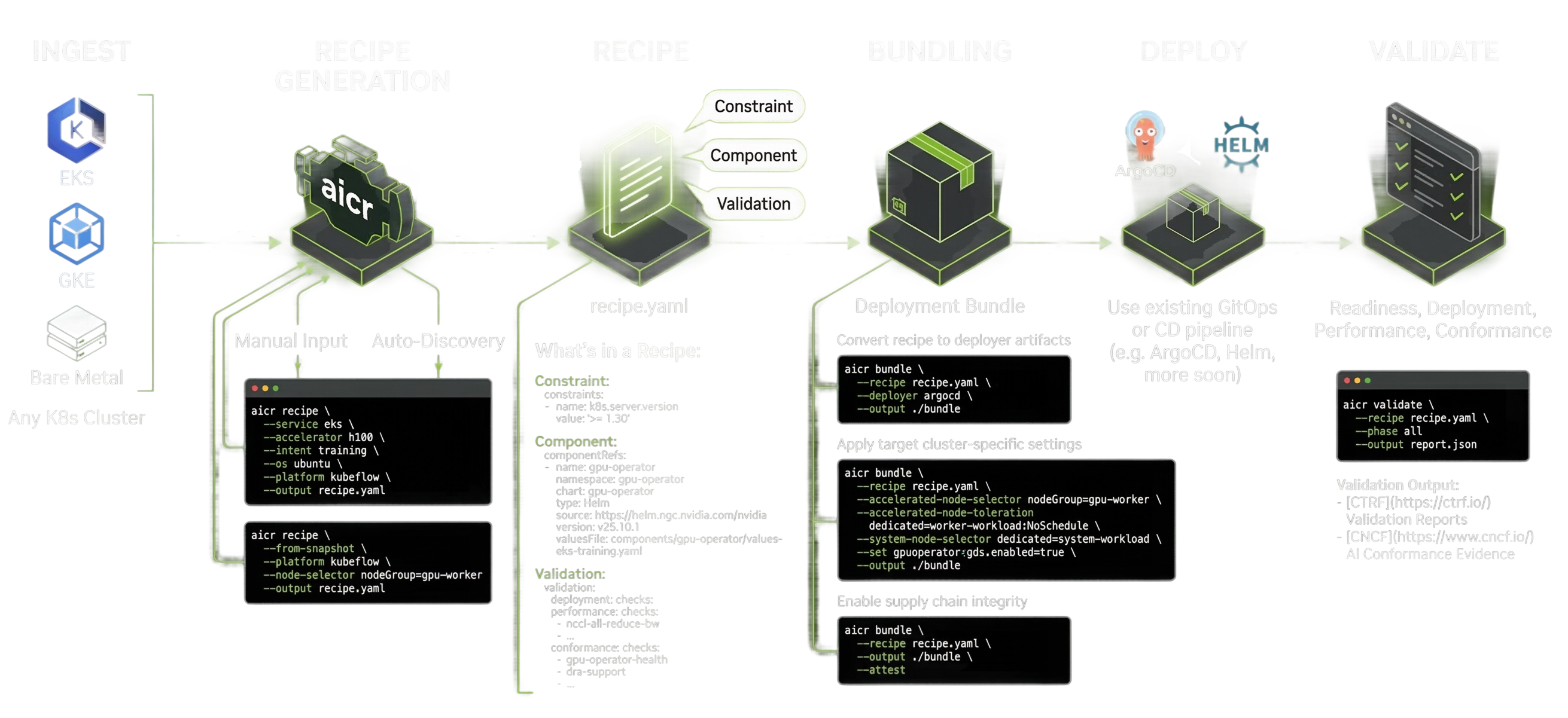

AI Cluster Runtime (AICR) makes it easy to stand up GPU-accelerated Kubernetes clusters with version-locked recipes you can deploy anywhere.

Running GPU-accelerated Kubernetes clusters reliably is hard.

Small differences in kernel versions, drivers, container runtimes, operators, and Kubernetes releases can cause failures that are difficult to diagnose and expensive to reproduce.

Historically, this knowledge has lived in internal validation pipelines and runbooks. AI Cluster Runtime makes it available to everyone.

Define a Working GPU Kubernetes Cluster in Minutes

You describe your target (cloud, GPU, OS, workload intent), and AICR generates a version-locked configuration you can deploy through your existing pipeline.
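One way to picture the generation step is to match layered overlays against the target description and merge the matching ones in order, from general to specific. This is a minimal sketch under stated assumptions. The overlay schema (`criteria`, `config`), the key names, and the placeholder values are all hypothetical and do not reflect AICR's actual recipe format or merge rules. It covers only matching and layering, not version locking or validation.

```python
from copy import deepcopy
from typing import Any


def deep_merge(base: dict[str, Any], overlay: dict[str, Any]) -> dict[str, Any]:
    """Return a new dict in which overlay values override base values, recursing into nested dicts."""
    merged = deepcopy(base)
    for key, value in overlay.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = deepcopy(value)
    return merged


def matches(criteria: dict[str, str], target: dict[str, str]) -> bool:
    """An overlay applies when every criterion it declares equals the target's value."""
    return all(target.get(key) == value for key, value in criteria.items())


def build_recipe(overlays: list[dict[str, Any]], target: dict[str, str]) -> dict[str, Any]:
    """Merge every matching overlay in list order, so later (more specific) layers win."""
    recipe: dict[str, Any] = {}
    for overlay in overlays:
        if matches(overlay["criteria"], target):
            recipe = deep_merge(recipe, overlay["config"])
    return recipe


# HYPOTHETICAL overlays with placeholder values, ordered base -> cloud -> accelerator -> OS -> intent.
# An empty criteria dict matches every target, which makes it the base layer.
OVERLAYS: list[dict[str, Any]] = [
    {"criteria": {}, "config": {"driver": {"version": "placeholder-base"}, "tuning": {"profile": "default"}}},
    {"criteria": {"cloud": "eks"}, "config": {"tuning": {"network": "eks-example"}}},
    {"criteria": {"cloud": "gke"}, "config": {"tuning": {"network": "gke-example"}}},
    {"criteria": {"accelerator": "h100"}, "config": {"driver": {"version": "placeholder-h100"}}},
    {"criteria": {"os": "ubuntu"}, "config": {"tuning": {"os": "ubuntu-example"}}},
    {"criteria": {"intent": "training"}, "config": {"tuning": {"profile": "training-example"}}},
]

target = {"cloud": "eks", "accelerator": "h100", "os": "ubuntu", "intent": "training"}
recipe = build_recipe(OVERLAYS, target)
# recipe == {"driver": {"version": "placeholder-h100"},
#            "tuning": {"profile": "training-example", "network": "eks-example", "os": "ubuntu-example"}}
```

Ordering the layers from general to specific lets a single accelerator or workload overlay override a base default without restating everything else. A real tool would also need rules for merging lists and resolving conflicting layers, and this sketch ignores both.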

Every AICR recipe is:

Optimized

Tuned for a specific combination of hardware, cloud, OS, and workload intent.

Validated

Passes automated constraint and compatibility checks before publishing.

Reproducible

Same inputs produce identical deployments every time.

Composable

Recipes compose from layered overlays: base defaults, cloud, accelerator, OS, and workload-specific tuning.

Secure

SLSA Level 3 provenance, SPDX SBOMs, and Sigstore cosign attestations on every release.

Standards-Based

Built on existing standards. Recipes are YAML, bundles produce Helm charts, and deployment works through Helm, ArgoCD, or any CD pipeline.
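Two small checks can illustrate the Validated and Reproducible properties. The first compares each pinned version against a minimum. The second hashes a canonical form of the recipe, so that two runs with the same inputs can be compared by digest. This is a minimal sketch. The `components` map, the minimum-version constraint format, and all component names and versions are hypothetical, and it does not describe how AICR's own constraint and compatibility checks work.

```python
import hashlib
import json
from typing import Any


def fingerprint(recipe: dict[str, Any]) -> str:
    """SHA-256 of a canonical JSON encoding, so key order and whitespace never change the digest."""
    canonical = json.dumps(recipe, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def parse_version(text: str) -> tuple[int, ...]:
    """Turn a purely numeric dotted version such as '2.1.0' into a comparable tuple."""
    return tuple(int(part) for part in text.split("."))


def check_constraints(recipe: dict[str, Any], minimums: dict[str, str]) -> list[str]:
    """Report each component that is not pinned or is pinned below its required minimum."""
    pinned = recipe.get("components", {})
    problems = []
    for component, minimum in sorted(minimums.items()):
        version = pinned.get(component)
        if version is None:
            problems.append(f"{component}: not pinned")
        elif parse_version(version) < parse_version(minimum):
            problems.append(f"{component}: {version} < required {minimum}")
    return problems


# HYPOTHETICAL component names, versions, and constraints, for illustration only.
recipe = {"components": {"component-a": "1.4.0", "component-b": "2.0.1"}}
minimums = {"component-a": "1.2.0", "component-b": "2.1.0", "component-c": "0.1.0"}

problems = check_constraints(recipe, minimums)
# problems == ["component-b: 2.0.1 < required 2.1.0", "component-c: not pinned"]

# The same content with keys in a different order yields the same digest.
same_inputs = {"components": {"component-b": "2.0.1", "component-a": "1.4.0"}}
print(fingerprint(recipe) == fingerprint(same_inputs))  # True
```

Canonicalizing before hashing matters because serializers may emit keys in different orders. Without that step, two identical recipes could produce different digests.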

Configure any Kubernetes cluster.

AICR generates recipes for managed or self-hosted Kubernetes deployments. Current recipes are optimized for EKS, GKE, and self-managed clusters with H100 and GB200 GPUs on Ubuntu and COS. Support for additional environments and accelerators is on the roadmap.

Don't see your environment? Add it.

AI Cluster Runtime is licensed under Apache 2.0. We welcome contributions from CSPs, OEMs, platform teams, and individual operators: new recipes, bundler formats, validation checks, or bug reports.

Copy an existing overlay, update the criteria and component configuration, run make qualify, and open a PR.

Quick Start

Install and generate your first recipe in under two minutes.